Further understanding human neuromuscular gait control using deep reinforcement learning

A gait model capable of generating human-like walking behavior at both the kinematic and the muscular level provides a powerful framework for developing state-of-the-art control schemes for humanoids and wearable robots (e.g. exoskeletons, prostheses). Developing such a model is challenging because it requires capturing the complexities of human neuromuscular gait control, which is not well understood. In this work, we aim to demonstrate the feasibility of using a deep reinforcement learning based approach to derive the sensory-motor mappings required to generate human-like locomotion behaviors.

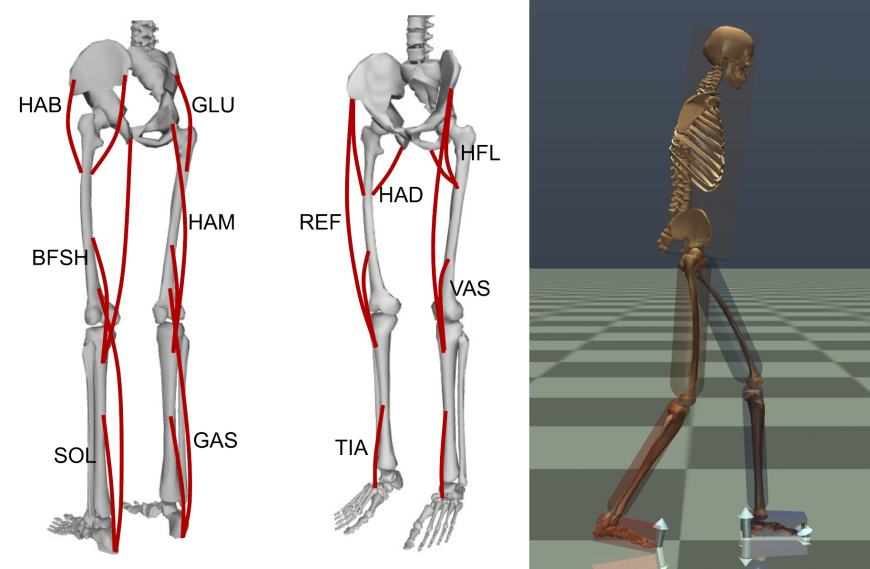

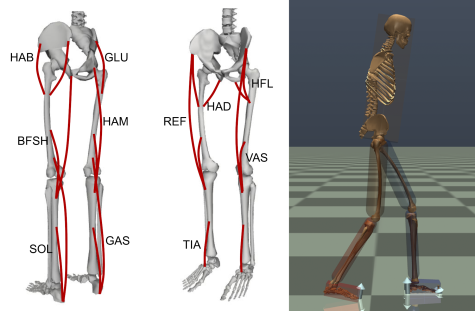

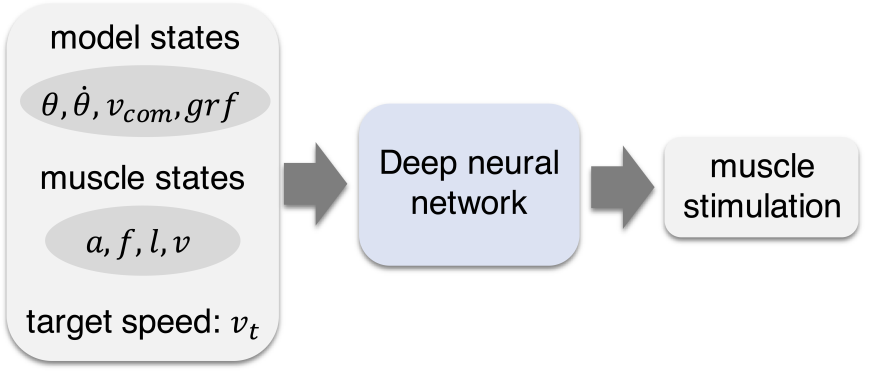

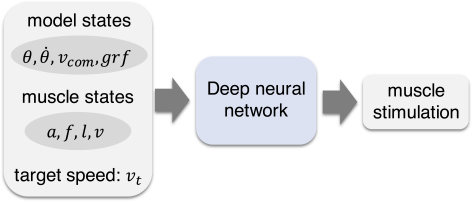

A lower limb gait model consisting of seven segments, fourteen degrees of freedom, and twenty-two Hill-type muscles was built to capture human leg dynamics and muscle properties. We implemented the proximal policy optimization algorithm to learn the sensory-motor mappings (the control policy) and generate human-like walking behavior for the model. Human motion capture data, muscle activation patterns, and metabolic cost estimates were included in the reward function for training.
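To make the structure of such a reward concrete, here is a minimal sketch of how the three ingredients named above (motion capture tracking, muscle activation patterns, and metabolic cost) could be combined into one scalar. It is an illustration only, not the reward used in our paper: the function name `gait_reward`, the exponential shaping, and all weights and scale factors are assumptions chosen for readability.

```python
import numpy as np


def gait_reward(q, q_ref, act, act_ref, metabolic_rate,
                w_kin=0.6, w_act=0.3, w_met=0.1,
                k_kin=5.0, k_act=2.0, k_met=0.01):
    """Hypothetical composite reward for one simulation step.

    q, q_ref       : simulated and reference joint angles (e.g. 14 values)
    act, act_ref   : simulated and reference muscle activations (e.g. 22 values)
    metabolic_rate : non-negative scalar estimate of metabolic power
    All weights (w_*) and scale factors (k_*) are illustrative assumptions.
    """
    q, q_ref = np.asarray(q, dtype=float), np.asarray(q_ref, dtype=float)
    act, act_ref = np.asarray(act, dtype=float), np.asarray(act_ref, dtype=float)

    # Kinematic imitation: 1 for perfect tracking, decays with squared error.
    r_kin = np.exp(-k_kin * np.mean((q - q_ref) ** 2))
    # Muscle-level imitation: same shaping applied to activation patterns.
    r_act = np.exp(-k_act * np.mean((act - act_ref) ** 2))
    # Effort term: 1 at zero metabolic rate, decays as the estimate grows.
    r_met = np.exp(-k_met * metabolic_rate)

    # The weights sum to 1, so the reward lies in (0, 1].
    return float(w_kin * r_kin + w_act * r_act + w_met * r_met)


# Example call with made-up values: 14 joint angles and 22 activations.
rng = np.random.default_rng(0)
q_ref = rng.uniform(-0.5, 0.5, size=14)
act_ref = rng.uniform(0.0, 1.0, size=22)
reward = gait_reward(q_ref + 0.05, q_ref, act_ref, act_ref, metabolic_rate=50.0)
```

Bounding each term between 0 and 1 keeps the three objectives on a comparable scale, so the weights directly express their relative importance. In practice the weights and scale factors would need tuning for the specific model and reference data.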

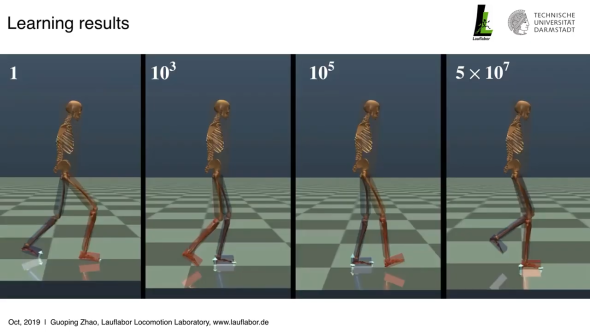

The results of our pilot study show that the model can closely reproduce human kinematics and ground reaction forces during walking. It can generate human walking behavior at speeds ranging from 0.6 m/s to 1.2 m/s, and it can withstand unexpected hip torque perturbations during walking. We further explored the advantages of the neuromuscular model over an ideal joint-torque-based model and observed that the neuromuscular model is more sample efficient than the torque model. Our future goal is to develop a deep-RL-based individualized walking gait model that is robust and capable of reproducing human responses to unexpected perturbations. With the learned model, we aim to identify optimal control schemes for human assistive devices (e.g. exoskeletons) and prostheses.

For further details, please see our paper.

This work was partially supported by the DFG-funded EPA Project under Grant No. AH307/2-1 and Grant No. SE1042/29-1.

Contacts: