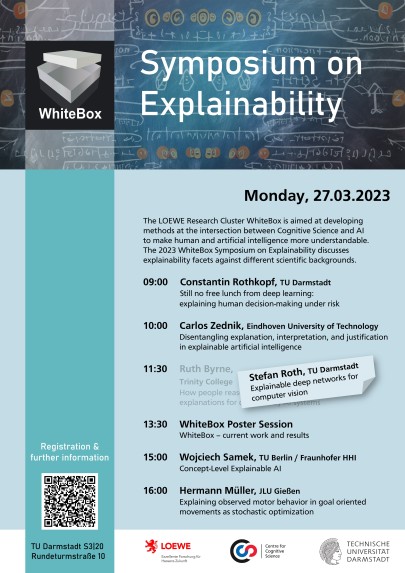

WhiteBox Symposium on Explainability

2023/03/28

As part of its first milestone, the LOEWE research cluster WhiteBox organised the “Symposium on Explainability”. The scientific program featured a line-up of European experts who presented different facets of explainability, from its philosophical foundations to understanding deep neural networks and human behavior and movement. The program was complemented by a poster session presenting and discussing the results of the WhiteBox project to date.

Although the event unfortunately coincided with a strike that halted most public transport in Germany (local, long-distance and air traffic), around 50 participants attended the Symposium in person.

Talks

- Constantin Rothkopf, TU Darmstadt: Still no free lunch from deep learning: explaining human decision-making under risk

- Carlos Zednik, Eindhoven University of Technology: Disentangling explanation, interpretation, and justification in explainable artificial intelligence

- Stefan Roth, TU Darmstadt: Explainable deep networks for computer vision *)

- Wojciech Samek, TU Berlin / Fraunhofer HHI: Concept-Level Explainable AI

- Hermann Müller, JLU Gießen: Explaining observed motor behavior in goal-oriented movements as stochastic optimization

*) Due to the transport strike, Ruth Byrne (Trinity College Dublin) was unfortunately unable to present her talk on human explanations for AI decisions.

Symposium Impressions

Poster